The Sixth Sense of Business Processes

Your business processes have a voice. QPR ProcessAnalyzer shows how they actually work: every step, every delay, every hidden inefficiency, so you can improve performance and achieve real business impact.

Visionary in the 2025 Gartner® Magic Quadrant™

QPR Software has been recognized as a Visionary in the 2025 Gartner® Magic Quadrant™ for Process Mining Platforms — for the third consecutive year!

Instead of scratching your head, how about knowing exactly?

Every business process leaves a trail – a digital footprint waiting to be understood. Within those footprints lies the ugly truth: not how your business is drawn in a manual, but how it lives and breathes in real time.

Hear what your processes are telling you

See how your processes truly run – not just how they were designed.

From bottlenecks to breakthroughs. Supercharged by AI.

Get AI-powered insights that reveal inefficiencies, explain their causes, and guide you to better results.

A process bottleneck saved is a million earned

Find and fix slowdowns in your processes – save time, cut costs, and boost performance.

The best value for your money

Customers have selected QPR ProcessAnalyzer because it provides enterprise-wide process mining at a fraction of the cost of traditional platforms, with unique Snowflake-native capabilities and customer-friendly terms.

AI-Powered Root Cause Analysis — A Breakthrough in Process Mining

Understanding why a process behaves the way it does has traditionally required experts, time, and manual deep dives.

Our new innovation, AI-Powered Root Cause Analysis (RCA), changes that by instantly revealing the real drivers behind any process phenomenon:

- Instant clarity: Move from symptoms to true causes in seconds — automatically.

- Multi-factor intelligence: AI uncovers combinations of influencing factors and identifies real cause-and-effect relationships.

- Effortless insight: Minimal manual work, maximum decision-making speed.

Why QPR ProcessAnalyzer stands out

- Superior Process Mining Capabilities: 100+ ready-made analyses, automated AI findings, no-code customization, powerful filtering, one-click AI-powered root cause analysis, dynamic event mapping, and advanced Object-Centric Process Mining (OCPM) for analyzing complex, multi-object processes.

- Built for Snowflake – The only process mining solution in the world that runs natively on Snowflake, with unlimited scalability, enterprise security, and real-time speed.

- Instant deployment – Available as a native app on Snowflake Marketplace, up and running in minutes.

- Flexible data connectivity – Works seamlessly with Snowflake or any other data source.

- AI-powered – Natural language queries and automated recommendations for process optimization.

- Enterprise-grade performance with better TCO – Full enterprise-level capabilities without the heavy infrastructure, implementation, or operating costs typically associated with traditional process mining platforms.

See QPR ProcessAnalyzer in action

Discover hidden process bottlenecks, improve performance, and make confident decisions in real time.

Key capabilities of QPR ProcessAnalyzer

Uncover the real flow of your processes with automatically generated, interactive process maps. Drill down into details or zoom out for high-level trends.

Detect inefficiencies, bottlenecks, and process variations that have the greatest impact on cost, speed, and performance.

Our AI-driven root cause analysis not only reveals what happened but also uncovers why: it automatically segments multiple influencing factors and identifies the true cause-and-effect relationships behind business process inefficiencies in seconds.

Enhanced by Snowflake’s machine learning capabilities.

Accelerate insights with 100+ pre-built analyses designed for fast process improvement.

Connect all your data sources effortlessly with QPR Connectors and unify siloed data for complete visibility.

Transform analysis with enterprise-grade AI: ask questions in plain language with the AI Assistant, get automated recommendations from the AI Agent, and predict outcomes with ML-powered forecasts. Powered by OpenAI and Snowflake Cortex.

Gain a 360° view of complex operations with native OCPM. Analyze multiple processes simultaneously and see how products, customers, suppliers, and other objects interact across your business. Recognized by Gartner as an advanced capability.

Stay ahead with real-time alerts on KPI breaches and business rule violations.

Discover and prioritize the best automation opportunities, benchmark automation levels, and simulate business impact.

Instantly generate BPMN 2.0-compliant diagrams from event data with a single click. The system intelligently identifies and maps real-world process flows, including parallel and exclusive gateways, to provide a factual baseline for process design.

We ensure the best experience for enterprises

Scale process mining across your entire organization without hidden costs. One license covers multiple processes — enabling true enterprise-wide usage.

Enterprise-grade security and governance with full role-based access control. Certified under ISO 27001, ISO 9001, and TISAX, a rare achievement in process mining.

Analyze billions of rows in seconds — the first and only process mining solution running natively on Snowflake. Fast, secure, and cost-efficient.

Deploy QPR ProcessAnalyzer instantly as a native Snowflake application. Simplify procurement, accelerate onboarding, and keep your data in place.

Buy QPR ProcessAnalyzer through AWS Marketplace to streamline procurement and centralize billing — and use your existing AWS commitments.

Leverage our worldwide partner ecosystem to get local expertise and support wherever you operate.

Access a comprehensive set of courses and certifications to maximize your process mining success.

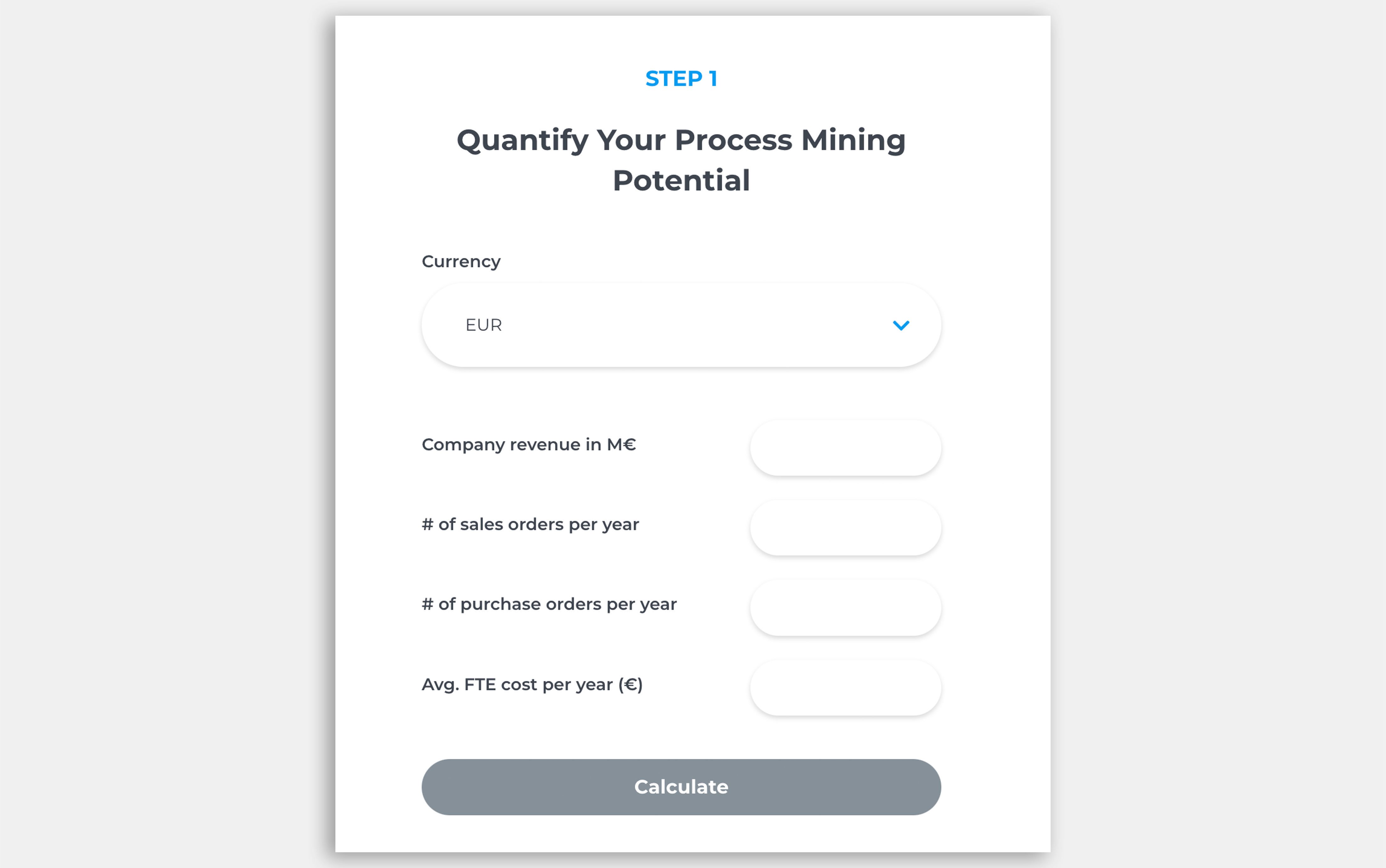

How much can you save with process mining?

Discover the savings potential of process mining in minutes. Our ROI Calculator gives you fast, tailored insights based on your company’s data.

See how leading companies drive change

Wärtsilä transformed their internal audit approach with QPR ProcessAnalyzer

"We get total visibility to our processes and data, there’s no longer a need for extrapolation and assumptions based on sampling."

Metsä Group eliminates uncertainties through continuous improvement

"The process insight and facts delivered by QPR ProcessAnalyzer were priceless. We were immediately able to focus our process improvement activities on the right things to achieve the results our business needed. Not wasting time on trial and error."

Piraeus Bank cuts lead times by 86% through unprecedented process transparency

Piraeus Bank locates process bottlenecks and increases efficiency.

"We gave the data to QPR ProcessAnalyzer, and right away, in 5 minutes, we saw the bottlenecks of the process."

Sanofi streamlined their internal job change process with QPR ProcessAnalyzer

“By combining user-centric process design with QPR ProcessAnalyzer, we reduced the median Job Change Process duration from 8.9 to 0.9 days and cut completion times by 90%.”

Seamless connection to your data sources

The business leader's guide to process mining

In this guide, you’ll get a clear introduction to process mining and how it helps enterprises drive efficiency, cut costs, and make smarter decisions.

Take the next step in process excellence.

Untangling Supply Chain Complexity with Object-Centric Process Mining (OCPM)

Process Mining in Financial Services: 5 Key Benefits